Picture this: It's the 1960 Olympics, and two runners cross the finish line in what looks like a dead heat. The crowd holds its breath. The winner? Whoever the head timekeeper thinks crossed first, based on his personal reaction time and a mechanical stopwatch that measures to the nearest tenth of a second. No slow-motion replay. No photo evidence. Just human judgment deciding Olympic gold.

For the better part of a century, this was how track and field worked. And it was a disaster waiting to happen.

When Humans Were the Technology

Before electronic timing became standard in the 1970s, track meets relied entirely on human officials armed with mechanical stopwatches. These timekeepers would start their watches when they saw the flash from the starter's pistol and stop them when runners crossed the finish line. The problems were obvious, even then.

First, there was reaction time. Even the most alert official needed roughly two-tenths of a second to process what they were seeing and press the stopwatch button. That delay was inconsistent and unpredictable. Some timekeepers were naturally faster than others. Some had better eyesight. Some got distracted by the crowd.

Then there was the finish line itself. In close races, determining exactly when a runner's torso crossed the line required split-second visual judgment. Officials were essentially making educated guesses about events happening faster than the human eye could reliably track.

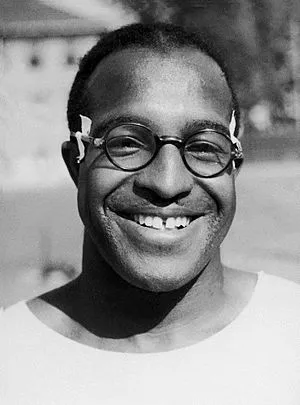

The 1932 Olympics in Los Angeles highlighted these problems perfectly. In the men's 100-meter final, Eddie Tolan and Ralph Metcalfe appeared to finish simultaneously. The timekeepers recorded identical times, and officials awarded Tolan the gold only after reviewing film of the finish, a decision that still came down to their judgment of who crossed first. Metcalfe spent the rest of his life convinced he'd been robbed.

Photo: Eddie Tolan, via kids.kiddle.co

The Margin of Error Was Massive

Manual timing wasn't just unreliable; it was systematically wrong. Studies later showed that hand-timed races were typically 0.15 to 0.25 seconds faster than electronically timed races over the same distance. This happened because timekeepers started their watches slightly late, since they needed a moment to react to the flash from the pistol, but could see the finish coming and stop their watches at roughly the right moment.
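
To make that bias concrete, here is a minimal sketch of the arithmetic. The function name and the example numbers (a true 10.50-second run and a 0.20-second reaction delay) are illustrative assumptions, not measurements from any real race.

```python
def hand_timed_result(true_time, start_delay, stop_delay=0.0):
    """Time shown on a stopwatch that was started `start_delay` seconds
    after the gun and stopped `stop_delay` seconds after the finish."""
    # A late start shortens the reading; a late stop lengthens it.
    return true_time - start_delay + stop_delay


# Hypothetical race: the runner really took 10.50 s, the timekeeper
# reacted 0.20 s late at the start and stopped the watch on time.
recorded = hand_timed_result(true_time=10.50, start_delay=0.20)
print(f"{recorded:.2f}")  # 10.30
```

If the timekeeper were just as slow at the finish as at the start, the two delays would cancel. The bias exists because the finish can be anticipated and the gun cannot.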

That quarter-second difference might not sound like much, but in sprinting, it's enormous. In today's 100-meter dash, 0.2 seconds can be the difference between winning a major final and missing the podium entirely.

Consider what this meant for the record books. Jesse Owens' legendary 10.3-second 100-meter run in 1936? It was probably closer to 10.5 seconds by today's standards. Still impressive, but nowhere near as superhuman as it seemed. Entire generations of "world records" were built on systematically inaccurate measurements.
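
The Owens estimate can be reproduced with a rough conversion. This is only a sketch: it assumes the 0.15 to 0.25 second bias quoted above applies uniformly, and the function name is made up for illustration.

```python
def electronic_equivalent_range(hand_time, low_bias=0.15, high_bias=0.25):
    """Estimate the range of electronic times matching a hand-timed mark.

    The default bias values are the 0.15 to 0.25 second range cited in
    the article; they are assumptions, not an official conversion rule.
    """
    return (round(hand_time + low_bias, 2), round(hand_time + high_bias, 2))


# Jesse Owens' hand-timed 10.3 from 1936
low, high = electronic_equivalent_range(10.3)
print(f"{low:.2f} to {high:.2f} seconds")
```

The result brackets the "closer to 10.5" figure, which is about as much precision as a correction like this can honestly claim.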

Photo: Jesse Owens, via res.cloudinary.com

When Technology Finally Showed Up

The transition to electronic timing began in the 1960s but wasn't universal until much later. Early electronic systems used a microphone to detect the starter's pistol and photoelectric cells at the finish line to sense when runners broke the beam. Suddenly, times could be recorded to the hundredth of a second, free of any timekeeper's reaction delay.

Photo finish cameras, introduced around the same time, revolutionized close races. Instead of relying on officials' eyes, a high-speed camera captured the exact moment each runner crossed the line. The famous photo finish became the final word in settling disputes.

But even this technology had growing pains. The 1972 Olympics featured one of the most controversial races in history when the photo finish equipment malfunctioned during the men's 100-meter final. Officials had to fall back on backup hand timing, creating exactly the kind of confusion the new technology was supposed to eliminate.

Today's Impossibly Precise World

Modern track timing makes the old days look like the Stone Age. Today's systems measure to the thousandth of a second, though official records only go to hundredths because that's considered the limit of meaningful precision for human performance.

Laser systems detect the exact moment a runner's torso crosses the finish line. High-speed cameras capture thousands of frames per second. Wind gauges measure wind speed to the nearest tenth of a meter per second. Temperature and altitude are recorded because they affect performance. Even the starter's pistol is electronic, with its sound played through speakers behind each starting block so that every runner hears the start signal at the same moment.

The 2016 Olympics showcased just how precise things have become. In the women's 100-meter final, the gap between second and third place was 0.03 seconds, a margin that would have been impossible to measure accurately in the manual timing era. The photo finish showed clear separation between runners, but only because cameras were capturing the race at 10,000 frames per second.
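
For a sense of what 10,000 frames per second buys you, here is a back-of-the-envelope sketch. The 10 meters per second finishing speed is a round-number assumption for an elite sprinter, and the function names are invented for this example.

```python
def frame_interval(fps):
    """Seconds between consecutive frames at a given frame rate."""
    return 1.0 / fps


def distance_per_frame(speed_m_per_s, fps):
    """Meters a runner moving at `speed_m_per_s` covers between frames."""
    return speed_m_per_s * frame_interval(fps)


fps = 10_000          # frame rate quoted in the article
sprinter_speed = 10.0  # m/s, assumed round number for illustration

print(f"{frame_interval(fps) * 1000:.1f} ms per frame")  # 0.1 ms per frame
print(f"{distance_per_frame(sprinter_speed, fps) * 1000:.1f} mm per frame")  # 1.0 mm per frame
```

Under those assumptions, a single hundredth of a second on the scoreboard spans about 100 frames, and a runner moves roughly a millimeter from one frame to the next.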

The Records That Never Were

Looking back, it's stunning how many "official" results from the manual timing era were probably wrong. Not just slightly off, but potentially backwards. How many silver medalists actually won? How many world records were set by runners who officially finished second?

We'll never know, because those races happened in the window between the rise of modern organized track and field and the arrival of accurate timing. For roughly 80 years, the most important moments in the sport were decided by human guesswork dressed up as precision.

The next time you watch a photo finish decided by thousandths of a second, remember that for most of sports history, the same race would have been decided by whoever the timekeeper thought looked fastest. It's a reminder of just how recently we started measuring the things we claim to care most about.